NXP hosted the first HoverGames Challenge, Fight Fire with Flyers, in May 2019. The contest attracted drone developers with different backgrounds from around the world (see map below) to come up with creative ways firefighters could use drones to protect and save lives. Contestants submitted their projects to Hackster.io and were judged on general documentation, video and photos, code and contributions, inventiveness, and technical depth.

Each contestant started with a HoverGames drone development kit (KIT-HGDRONEK66) as a drone platform. This professional developer kit provides the RDDRONE-FMUK66 flight management unit, brushless DC (BLDC) motors, electronic speed controllers (ESCs), propellers, an RC remote controller, a carbon fiber frame, and miscellaneous cables, screws, and tools. The flight management unit is supported by our PX4 flight stack.

We have selected some of our favourite projects from the contest to share with the PX4 community.

Let’s first look at some of the choices of hardware these developers used.

| Projects | Companion Computer of Choice | Sensors Used for Detection |

| A video warning HoverGames drone to fight with the fire |

Raspberry Pi 3 Model B+ Arduino Nano R3 |

Raspberry Pi Camera Module |

| ML Fire Class Analysis | Espressif ESP32S |

Pixy2 Vision Sensor Seeed Grove – Multichannel Gas Sensor SparkFun Triad Spectroscopy Sensor – AS7265x |

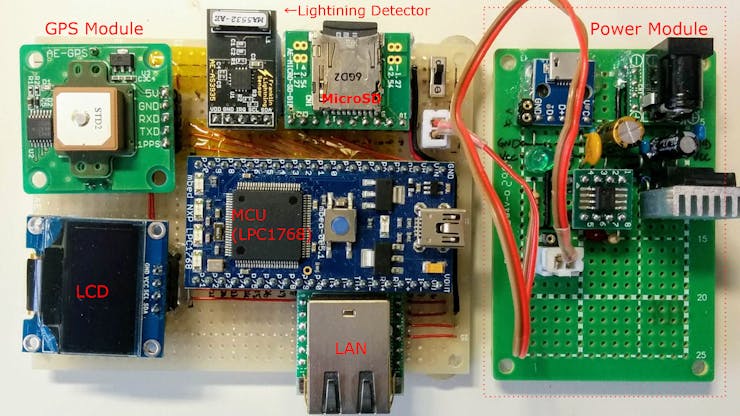

| Wildfire Scouting System | NXP Arm Mbed LPC1768 Board |

Pixy2 Vision Sensor SparkFun AS3935 Lightning Detector Melexis MLX90614 infrared thermometer |

| HoverGames: Air Strategist Companion | Raspberry Pi 3 Model B+ |

Raspberry NoIR camera V2.0 Melexis MLX90614 infrared thermometer |

| Autonomous AI Assessment & Communications Platform | Google Coral Dev Board |

Google Coral Dev Board Camera Google Coral Environmental Sensor Board Seeed Grove O2, Gas, CO2 Sensors. Seeed Grove TF Mini Lidar Pixy2 Vision Sensor |

Now let’s take a look at some of the winning projects:

A Video Warning HoverGames Drone to Fight with the Fire

1st Place Award

By Dobrea Dan Marius from Iași, Romania

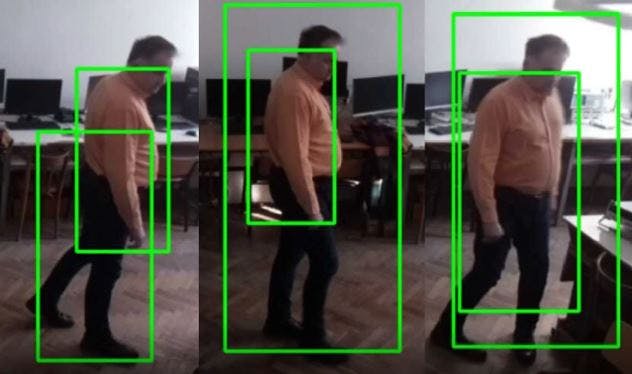

One of the main goals of firefighters is to prevent human death. The goal of this project is to detect humans in a fire using a real-time drone technology system to assist with search and rescue.

A machine learning model was trained to detect humans. Detailed instructions on how the model was trained are provided on the project's Hackster page. Dobrea found that human detection performance is excellent; however, to get the most out of the detection model, it is best to replace the onboard Raspberry Pi with a more powerful system.

To assist with search and rescue, an obstacle avoidance system was developed using four HRLV-MaxSonar-EZ sensors and an Arduino Nano. Three sensors were mounted in the front, and one was mounted in the rear.
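As a rough illustration of how four range readings could gate the drone's motion, here is a minimal Python sketch. It is not the project's actual logic: the `SonarReadings` type, the `allowed_motion` function, and the 100 cm clearance threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class SonarReadings:
    """Hypothetical container for one set of range readings, in centimetres."""
    front_cm: Tuple[float, float, float]  # the three front-mounted sensors
    rear_cm: float                        # the single rear-mounted sensor


def allowed_motion(readings: SonarReadings, min_clearance_cm: float = 100.0) -> Dict[str, bool]:
    """Return which directions are clear (threshold is an assumed value)."""
    return {
        # Forward is blocked if ANY front sensor sees something too close.
        "forward": min(readings.front_cm) > min_clearance_cm,
        "backward": readings.rear_cm > min_clearance_cm,
    }


readings = SonarReadings(front_cm=(250.0, 80.0, 300.0), rear_cm=400.0)
print(allowed_motion(readings))
```

Taking the minimum over the front sensors is a conservative choice: a single close reading is enough to stop forward motion.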

ML Fire Class Analysis

2nd Place Award

By AK

Finding the source of a fire is often difficult but very beneficial: once the source and properties of the fire are known, the most efficient fire suppression method can be chosen to tackle the incident.

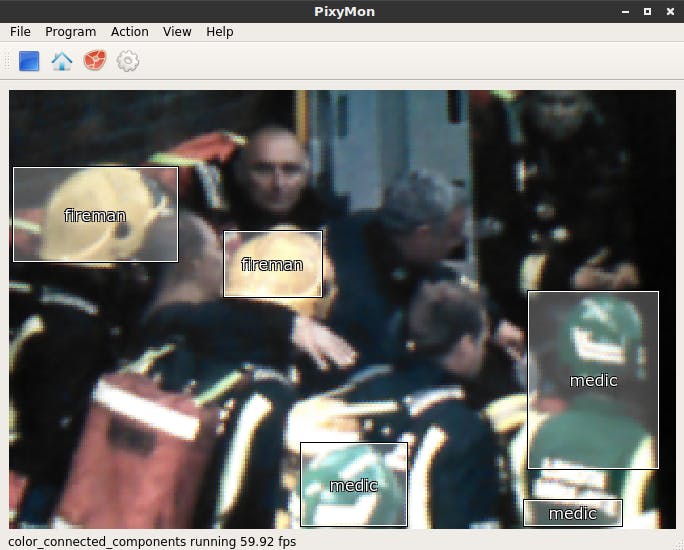

AK created a drone to take on this challenging task. The drone is equipped with multiple gas sensors, a spectroscopy sensor, and a Pixy2 vision sensor to determine the presence of first responders and, with the help of machine learning, the fire class.

Various materials were burned and data were collected using the gas sensors and spectroscopy sensor. A machine learning model was created with this information in order to determine the fire class.

Some fire suppression methods are unsuitable when people, including first responders, are present. A Pixy2 Vision Sensor was therefore used to find out whether a first responder is present by looking for the highly visible colors of hard hats.

Once the fire class and the presence of a crew are determined, this information can help decide the best extinguishing agent to use on the fire.

Wildfire Scouting System

3rd Place Award

By Tatsuya Iwai from Sapporo, Japan

Forest fires have either natural or human causes, and naturally caused fires are mainly started by lightning. Monitoring such potential fire risks takes a lot of human effort because lightning can strike anywhere at any time. Tatsuya’s Wildfire Scouting System aims to solve this issue.

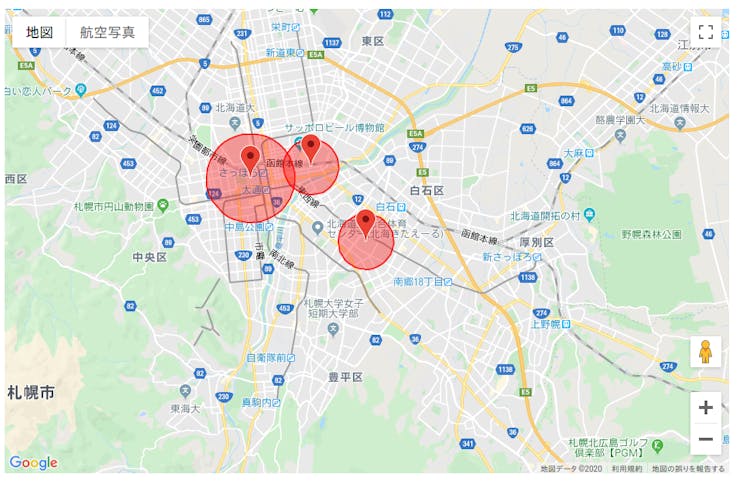

A lightning detection system was created with an AS3935 module, which can measure the distance to lightning up to 40 km away with an accuracy of 1 km, as well as the scale of the lightning. The system includes a GPS module, which provides an accurate location and timestamp for logging lightning events. Once the system detects lightning, it sends information about the strike (what time, how far, how strong) and the location of the device to the repository server via the Internet.
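The report sent to the server could be represented as a small record serialized to JSON. The sketch below only illustrates the kind of data described above. The `LightningEvent` class, its field names, and the sample values are hypothetical, not the project's actual message format.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class LightningEvent:
    """Hypothetical record for one detected strike (field names are assumptions)."""
    timestamp_utc: str   # what time (from the GPS module)
    distance_km: int     # how far
    energy: int          # how strong (unitless sensor reading)
    device_lat: float    # detector location
    device_lon: float


def to_payload(event: LightningEvent) -> str:
    """Serialize the event as a JSON string suitable for an HTTP request body."""
    return json.dumps(asdict(event), sort_keys=True)


# Sample values are made up for illustration only.
event = LightningEvent("2019-08-01T12:34:56Z", 12, 48000, 43.06, 141.35)
print(to_payload(event))
```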

A mapping system was then created to display when lightning is detected and to show its distance from the detector as a circle around the detector's location. Using multiple lightning detection systems, one can estimate the location of a strike from the distance and strength of the lightning and the GPS locations of the detectors.
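The idea of combining several detectors can be sketched as classic trilateration: each detector defines a circle, and the strike lies where the circles meet. The example below is a simplified illustration, not the project's code. It assumes exactly three detectors whose GPS positions have already been projected to flat x/y coordinates in kilometres, and the `locate_strike` function is hypothetical.

```python
import math


def locate_strike(detectors):
    """Estimate a strike position from three (x_km, y_km, distance_km) tuples.

    Subtracting the first circle equation from the other two removes the
    quadratic terms and leaves two linear equations a*x + b*y = c.
    """
    (x1, y1, d1), (x2, y2, d2), (x3, y3, d3) = detectors
    a1, b1 = 2 * (x2 - x1), 2 * (y2 - y1)
    c1 = d1**2 - d2**2 + x2**2 - x1**2 + y2**2 - y1**2
    a2, b2 = 2 * (x3 - x1), 2 * (y3 - y1)
    c2 = d1**2 - d3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a1 * b2 - a2 * b1
    if abs(det) < 1e-9:
        raise ValueError("detectors are collinear; position is ambiguous")
    # Solve the 2x2 linear system with Cramer's rule.
    x = (c1 * b2 - c2 * b1) / det
    y = (a1 * c2 - a2 * c1) / det
    return x, y


# Synthetic check: distances computed from a known strike at (3, 4) km.
strike = (3.0, 4.0)
sites = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
detectors = [(sx, sy, math.dist((sx, sy), strike)) for sx, sy in sites]
print(locate_strike(detectors))
```

With real measurements the distances are only accurate to about 1 km, so the circles will not meet in a single point and the result should be treated as an estimate.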

Tatsuya found that the Pixy2 vision sensor performed poorly at fire detection because it uses a hue-based color filtering algorithm to detect objects, and actual fire has strong brightness but weak hue.
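The "strong brightness but weak hue" observation can be illustrated by converting pixels to HSV with Python's standard `colorsys` module. This is only a sketch of the general effect, not the Pixy2's actual algorithm. The two sample pixels, the `has_usable_hue` helper, and the 0.3 saturation threshold are assumptions chosen for illustration.

```python
import colorsys


def has_usable_hue(rgb, min_saturation=0.3):
    """Return True if a pixel is saturated enough for hue matching.

    rgb is a tuple of floats in the range 0..1. The threshold is an
    assumed value, not taken from any sensor documentation.
    """
    _hue, saturation, _value = colorsys.rgb_to_hsv(*rgb)
    return saturation >= min_saturation


orange_object = (1.0, 0.5, 0.0)    # strongly colored surface, e.g. a hard hat
flame_core = (1.0, 0.98, 0.92)     # overexposed, nearly white flame pixel

for name, pixel in [("orange object", orange_object), ("flame core", flame_core)]:
    h, s, v = colorsys.rgb_to_hsv(*pixel)
    print(f"{name}: saturation={s:.2f} value={v:.2f} usable_hue={has_usable_hue(pixel)}")
```

Both pixels are equally bright, but the near-white flame pixel has almost no saturation, so its hue carries little information for a color filter to match against.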

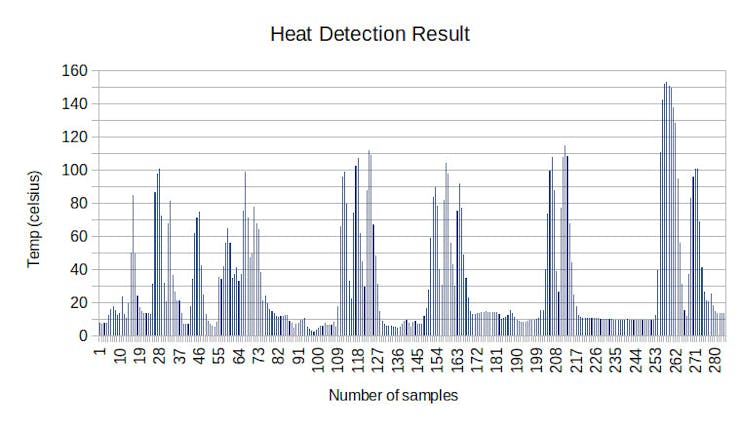

A Melexis MLX90614 infrared thermometer was chosen to detect the presence of fire. Testing was done on burning charcoal from a distance of 60 cm. The sensor performed well, but the reading dropped quickly when the sensor was moved away from the charcoal. The drone therefore has to fly close to the fire for optimum detection.

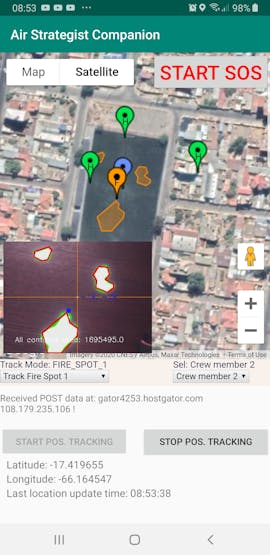

HoverGames: Air Strategist Companion

PX4 Award

By Raul Alvarez-Torrico from Bolivia

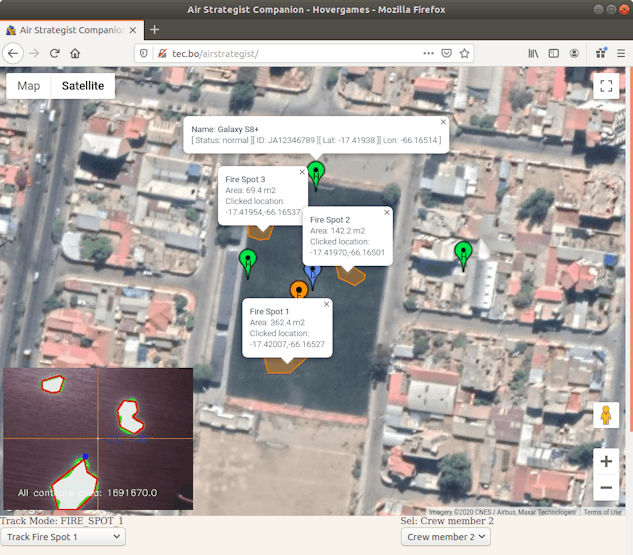

Raul Alvarez-Torrico created a system composed of a quadrotor drone, a web server and an Android application designed for the purpose of enhancing situational awareness for forest firefighting crews.

The system tracks fire spots and members of the firefighting crew in real time, and displays their locations on an online map that can be accessed from a web browser and from the Android application. The drone flies over the fire area and uses an infrared camera with computer vision to identify fire spots autonomously. The drone then tracks the fire and repositions itself over it without human intervention.
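One simple way to turn an infrared frame into a "move toward the fire" signal is to threshold the image and take the centroid of the hot pixels. The sketch below illustrates that idea only. It is not Raul's implementation, and the `fire_offset` function, the frame layout, and the threshold of 200 are assumptions.

```python
def fire_offset(frame, threshold=200):
    """Return the normalised (dx, dy) offset of the hot spot from the image centre.

    frame is a list of rows of pixel intensities (at least 2x2). The result is
    in the range -1..1 on each axis, or None if no pixel reaches the threshold.
    """
    rows, cols = len(frame), len(frame[0])
    hot = [(r, c) for r in range(rows) for c in range(cols) if frame[r][c] >= threshold]
    if not hot:
        return None
    centroid_row = sum(r for r, _ in hot) / len(hot)
    centroid_col = sum(c for _, c in hot) / len(hot)
    half_w, half_h = (cols - 1) / 2, (rows - 1) / 2
    # (0, 0) means the hot spot is directly under the camera centre.
    return (centroid_col - half_w) / half_w, (centroid_row - half_h) / half_h


frame = [[0] * 5 for _ in range(5)]
frame[0][4] = 255  # a single hot pixel in the top-right corner
print(fire_offset(frame))
```

A controller could feed this offset into velocity setpoints until it approaches (0, 0), at which point the drone is roughly above the fire spot.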

Raul developed a custom Android application intended to be installed on the firefighting crew members’ mobile devices. The application tracks the device’s GPS coordinates and uploads them to the web server. The position of all crew members detected by the system is visualized with markers on the online map, along with their names, ID codes, GPS coordinates and current status.

At the command center, the personnel in charge can monitor all the information made available by the system, and at the same time control the drone’s autonomous tracking task modes, all from the same web page.

The code for controlling the drone was developed with the help of simulation. The PX4 Gazebo ‘Software in the Loop’ (SITL) simulator was used, running on an Ubuntu 18.04 PC with ROS Melodic installed. The system was extensively tested in simulation and performed very well. Extensive field tests with the real quadcopter have not been performed yet.

Autonomous AI Assessment & Communications Platform

First Responders Award

By Jim Ewing from Boston, Massachusetts, United States

Jim Ewing created an AI-enhanced drone that assists firefighters on the ground and in control centers via IR and HD video streams and gas sensor data.

Jim chose to use the Google Coral Dev Board as the companion computer in this project. A model for the Tensor Processing Unit (TPU) on the Coral Dev Board was trained on the Pascal Visual Object Classes (VOC) data set, found at http://host.robots.ox.ac.uk/pascal/VOC/index.html, to recognize objects and people. The system recognizes and counts the number of human beings it sees via the high-definition Coral Dev Board camera.
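Counting people from an object detector's output usually comes down to filtering detections by label and confidence. The sketch below shows that step in isolation. The `count_people` function, the `(label, score)` tuple format, and the 0.5 threshold are assumptions and do not reflect the project's code or any specific Coral API. The `"person"` label is one of the Pascal VOC classes.

```python
def count_people(detections, min_score=0.5):
    """Count detections labelled 'person' whose confidence meets the threshold.

    detections is an iterable of (label, score) tuples, a hypothetical
    simplified form of what an object detector returns per frame.
    """
    return sum(1 for label, score in detections
               if label == "person" and score >= min_score)


# Made-up detections for a single frame.
frame_detections = [("person", 0.91), ("car", 0.88), ("person", 0.42), ("person", 0.67)]
print(count_people(frame_detections))
```

Raising `min_score` trades missed detections for fewer false positives, which matters when the count is used to direct a rescue.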

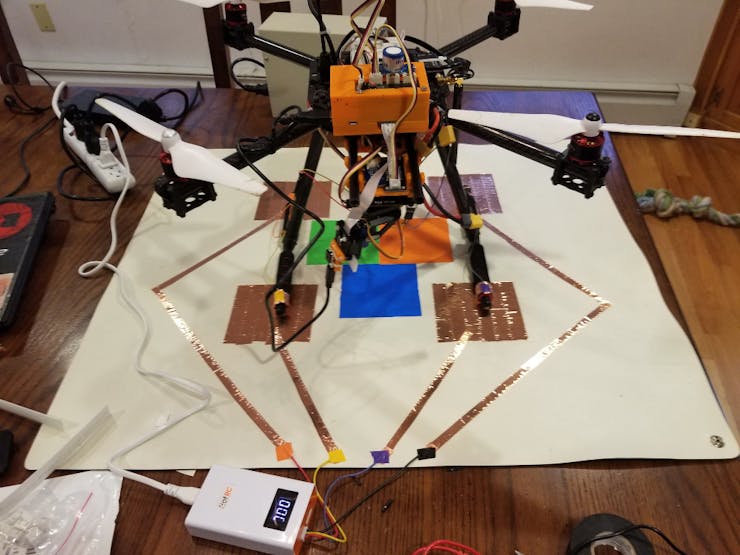

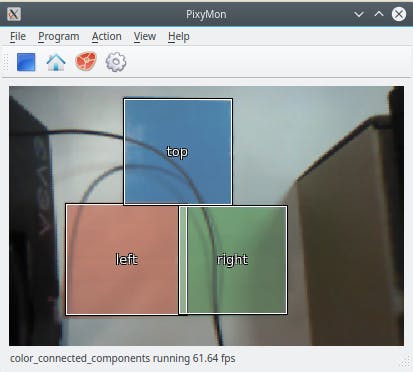

This project incorporated a landing pad and used camera vision to land on it. A Pixy2 Vision Sensor was trained to recognize the blocks on the landing pad labeled “Top”, “Right” and “Left”. The landing pad also had four charging pads, which connect to the battery wires for automatic charging.

This drone also has multiple sensors that detect temperature, humidity, barometric pressure, O2 level, CO2 level, and volatile organic compound levels, as well as surrounding light intensity in lux. A webpage was created to display this data along with a camera stream. This information can be very helpful for firefighters both on the ground and at the command center.